Most human performance and health environments face a dichotomy between what is actionable and what is interesting. As a result, the debate over simple versus raw data has become a significant point of contention in performance and medicine.

Actionable data can be defined as variables simplified enough for the practitioner to guide decisions immediately. The most fast-paced operational environments take it a step further: the data is simplified enough that the measured individual can act on it without interpretation from a trained or certified practitioner. We will refer to this measured individual as the end-user, whether a warfighter, an athlete, or even a patient.

So, if actionable data is something anyone can act on immediately, how should the information be presented to be most actionable? Actionable data is often presented as normative values, such as percentiles or T scores, that place the raw value on a bell curve with 50 as the average. Such normalization answers the question of how you compare against others. For example, a running back with below-average explosiveness (e.g., a T score of 40, one standard deviation below the group average) needs to focus on explosiveness because the position requires this movement quality. If the raw value of 4234.5 newtons per second is presented instead, the end-user cannot tell whether that is good or bad. Even if the practitioner is aware of its significance, the raw value leaves out the further context of the metric's inherent reliability or consistency. So the best normalizations also include the standard deviation in their calculation. A T score is the difference between the individual's performance and the group average, divided by the group's standard deviation, then rescaled so that the average is 50 and each standard deviation is worth 10 points. Expressing the result in standard deviations puts it in the context of the normal fluctuations (noise) inherent in any human analysis.

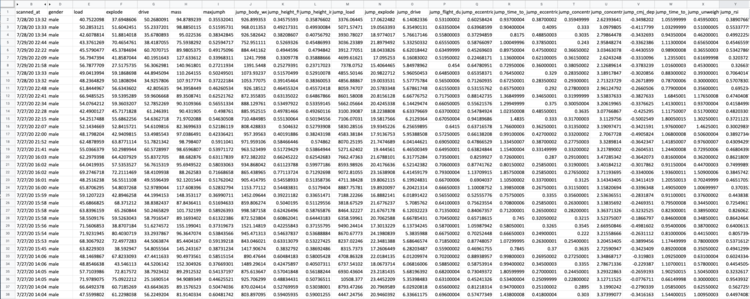
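As a rough illustration of the T score described above, here is a minimal Python sketch. It assumes the common convention of a mean of 50 and 10 points per standard deviation, and it uses the sample standard deviation; the function name `t_score` and the group values are hypothetical, chosen only to show the arithmetic, and are not real normative data.

```python
from statistics import mean, stdev


def t_score(value, group_values):
    """Convert a raw value to a T score (group mean -> 50, 1 SD -> 10 points).

    Assumption: the sample standard deviation is used; a platform with a
    large normative database might use the population SD instead.
    """
    group_mean = mean(group_values)
    group_sd = stdev(group_values)
    if group_sd == 0:
        raise ValueError("group has no variation, so a T score is undefined")
    return 50 + 10 * (value - group_mean) / group_sd


# Hypothetical group of raw explosiveness values, in newtons per second.
group = [3800.0, 4000.0, 4200.0, 4400.0, 4600.0]

# A value equal to the group mean maps to 50; lower values fall below 50.
print(t_score(4200.0, group))
print(t_score(3900.0, group))
```

The same raw number can land on either side of 50 depending on the comparison group, which is why the choice of group (position, sport, or population) matters as much as the formula.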

However, actionable data alone can limit future innovation. Interesting breakthroughs often come from trained scientists analyzing raw data more deeply. An environment with a small ratio of end-users to practitioners gives the organization more time for that deeper analysis. The challenge is that raw data analysis requires significant time to filter the raw time-series data (thousands and thousands of data points) into a meaningful calculation. For example, a rate of force is not just subtracting point A from point B and dividing by time; it draws on thousands of time slices of the force-time curve. It can take even longer to build up enough trends to know whether a raw variable is meaningful or actionable at all. This investment of time comes at the expense of the time committed to the relationship between the end-user and practitioner, and to acting on the data. What else could be taught or focused on rather than the practitioner spending all of this time analyzing?
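To make the rate-of-force example above concrete, the sketch below contrasts a two-point calculation with one that scans every window of the trace. It is only an illustration under stated assumptions: the 1000 Hz sampling rate, the 50-sample window, the synthetic force trace, and both function names are hypothetical, and a simple windowed slope stands in for whatever filtering and calculation a real force-plate pipeline performs.

```python
def two_point_rate(force, dt):
    """Naive rate of force: (last sample - first sample) / elapsed time."""
    return (force[-1] - force[0]) / (dt * (len(force) - 1))


def peak_windowed_rate(force, dt, window):
    """Largest average slope found over any span of `window` samples."""
    if window < 1 or len(force) <= window:
        raise ValueError("window must be >= 1 and shorter than the trace")
    best = float("-inf")
    for i in range(len(force) - window):
        slope = (force[i + window] - force[i]) / (window * dt)
        best = max(best, slope)
    return best


# Synthetic trace sampled at an assumed 1000 Hz: quiet standing at 800 N,
# a steep 0.1 s rise of 20 N per sample, then a plateau at 2800 N.
dt = 0.001
force = [800.0] * 100 + [800.0 + 20.0 * k for k in range(1, 101)] + [2800.0] * 100

naive = two_point_rate(force, dt)
peak = peak_windowed_rate(force, dt, window=50)
print(f"two-point: {naive:.0f} N/s, peak windowed: {peak:.0f} N/s")
```

On this trace the two-point figure is diluted by the flat segments before and after the rise, while the windowed scan recovers the steep portion. Real traces also carry sensor noise, so filtering choices add further analysis time.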

Perhaps the best environment for raw data variables is an organization with many full-time data scientists who can filter, analyze, and turn the raw data into their own version of simplified data. We often see this situation in a purely academic setting, where the mission is to publish new findings or validate existing protocols. Related to this validation is another value of raw data: the comfort that a sensor or technology is calculating the simplified variables correctly. Having only actionable, simplified variables can mask calculations that could use improvement. Are the metrics even reliable and consistent enough to use?

So the answer is that both simple and raw data are valuable, but for different reasons depending on the environment, the personas involved, and the resources available (e.g., time, budget, and staff). With the rise of machine learning and artificial intelligence, practitioners have to adapt to the idea that not everything is as simple as it seems. Large Excel spreadsheets from a single organization cannot provide the same statistical power and significance as a multi-organizational platform performing time-series analysis dynamically.

At Sparta Science, we leverage machine learning to continually improve the actionable data we present to both the practitioner and end-user, while also making raw data openly available should the situation warrant its usage.

As in any profession or industry, choices around data are made to achieve the best outcome. So, in this case, we should all be united around one question: how does the end-user operate at a higher and healthier level?