In Part I of this series, we discussed the different things to look for in the design and methods of a research study to make sure you are looking at quality research. By simply looking at the type or size of the population of the study you should be able to immediately dismiss studies that should not be applied to your situation. We also discussed the importance of reliability, validity, and choosing applicable statistical models to run on the collected data. Now we will continue by looking at how to interpret the results.

After the statistical models are run, the interpretation of the results also needs to make sense. Most research presents a variety of statistical information, so being able to interpret these values is extremely important.

The distribution of a data set shows all of the possible values of the data and how often they occur. T scores and Z scores are both standardized values that show how far a value is from the mean of a distribution, and they are interpreted the same way. Z scores come from the standard normal distribution, or bell curve. T scores come from a similar curve that is a little flatter, with heavier tails, which accounts for the extra uncertainty of a small sample size. Each T score or Z score has a P value associated with it.
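
As a rough illustration of how these standardized scores are computed, here is a minimal Python sketch using only the standard library. The function names and the numbers are made up for the example, and the P value shown applies to the Z score only; a T score would need a T distribution, which typically comes from a statistics package.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def z_score(value, population_mean, population_sd):
    """Distance of a value from the mean, in standard deviations."""
    return (value - population_mean) / population_sd


def one_sample_t(sample, reference_mean):
    """T score for a sample mean against a reference mean.

    Uses the sample standard deviation, which is why small samples
    call for the flatter T curve instead of the normal curve.
    """
    standard_error = stdev(sample) / sqrt(len(sample))
    return (mean(sample) - reference_mean) / standard_error


def two_sided_p_from_z(z):
    """Two-sided P value for a Z score under the standard normal curve."""
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Made-up example: a value of 130 where the mean is 100 and the SD is 15.
z = z_score(130, 100, 15)
print(f"z = {z:.2f}, two-sided p = {two_sided_p_from_z(z):.4f}")
```

A Z score of 2 means the value sits two standard deviations above the mean, which corresponds to a two-sided P value just under .05.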

The P value is the probability of seeing a result at least as extreme as the one observed if there were truly no difference (that is, if random chance alone were at work). Significance is a classification of what probability is acceptable, usually determined by an expert in the field and often set at .05. If the P value is less than the set significance level (.05), the result is classified as significant and suggests there is a real difference between the values being compared. Notice that a significance level of .05 means that when there is truly no difference, about 1 in 20 comparisons will still come out significant by chance.
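
The 1 in 20 figure can be checked with a small simulation. The sketch below is an illustration under simplifying assumptions, not a method from any particular study: both groups are drawn from the same population (so there is no real difference), the standard deviation is assumed known and equal to 1, and a simple Z test is used. The function name and parameters are hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist, mean


def false_positive_rate(trials=2000, n=30, alpha=0.05, seed=0):
    """Share of comparisons flagged significant when no difference exists."""
    rng = random.Random(seed)
    standard_normal = NormalDist()
    # Standard error of the difference between two means when SD is 1.
    standard_error = sqrt(2 / n)
    significant = 0
    for _ in range(trials):
        # Both groups come from the same population: mean 0, SD 1.
        group_a = [rng.gauss(0, 1) for _ in range(n)]
        group_b = [rng.gauss(0, 1) for _ in range(n)]
        z = (mean(group_a) - mean(group_b)) / standard_error
        p_value = 2 * (1 - standard_normal.cdf(abs(z)))
        if p_value < alpha:
            significant += 1
    return significant / trials


rate = false_positive_rate()
print(f"Flagged significant with no real difference: {rate:.3f}")
```

The printed share should land close to .05, which is why a single significant result, on its own, is not proof of a real difference.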

The effect size is a simple way of representing the size of the difference between two groups. Effect sizes are often reported along with P values to show the size of the difference along with its significance. It is important to look at effect sizes because we are often presented with data that is statistically significant but practically insignificant.
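
To make the gap between statistical and practical significance concrete, here is a small sketch. It assumes Cohen's d (the difference in means divided by the pooled standard deviation) as the effect size, which is one common choice and not necessarily the one a given paper reports. The P value helper uses a normal approximation for two equal-sized groups, and all names and numbers are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def cohens_d(group_a, group_b):
    """Difference in means expressed in pooled standard deviations."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_variance)


def approx_p_value(d, n_per_group):
    """Approximate two-sided P value for effect size d with equal groups."""
    z = d * sqrt(n_per_group / 2)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# The same tiny effect (d = 0.05) at two different sample sizes.
for n in (50, 10_000):
    print(f"n per group = {n}: p = {approx_p_value(0.05, n):.4f}")
```

With 50 athletes per group the tiny effect is nowhere near significant, but with 10,000 per group it is, even though the difference between the groups is just as small in both cases.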

R represents correlation and is often called the correlation coefficient. It is a measurement of association between -1 and 1. A value close to 1 is a positive correlation: if A and B have a positive correlation, then as A increases B also increases. A value close to -1 is a negative correlation: if A and B have a negative correlation, then as A increases B decreases. A value close to 0 indicates little or no linear relationship. For example, some of our partners have found a positive correlation between minutes played and the EXPLODE variable from our Sparta Signature. Always remember, correlation DOES NOT MEAN causation.
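
For readers who want to see where R comes from, here is a minimal sketch of the Pearson correlation coefficient in Python. The function name is hypothetical and the numbers are invented for illustration; they are not Sparta data.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x, mean_y = mean(xs), mean(ys)
    # How much x and y move together around their means.
    co_movement = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # How much each variable moves on its own.
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return co_movement / sqrt(spread_x * spread_y)


# Invented numbers: minutes played and a made-up test score for six athletes.
minutes_played = [10, 15, 22, 28, 31, 36]
test_score = [48, 50, 55, 53, 60, 62]
print(f"r = {pearson_r(minutes_played, test_score):.2f}")
```

A value near 1 here would only say that the two measures rise together in this sample. It would not say that playing more minutes causes the higher score, or the other way around.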

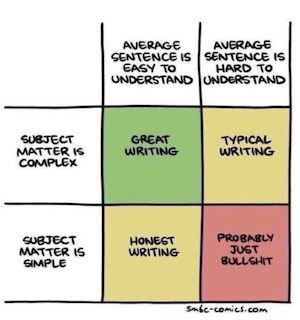

Remember, good data is the foundation of good research. Most mistakes in research stem from bad or incomplete data. Just because research is available or published doesn’t always mean it is good research. Practitioners need to take initiative when reading articles, research, blogs, and other information to make sure they understand what they are reading.

Understand the research methods:

Know the data set: population, duration, sample size, characteristics.

Be sure all measurements used are both reliable and valid.

Know whether the research is controlled or observational. The latter is more common, but the former is more powerful.

Understand the results:

Understand the statistical model used.

Know how to interpret the results. Correlation does NOT mean causation.

P values should be presented to show significance, along with effect sizes to show the size of the difference.

If all of these are explained and easily understood in the paper then the research is probably good research. As a practitioner, reading and understanding research should be a part of your routine. It is important to look for practical findings that can help your athletes, but first and foremost it is critical to be able to identify good research.